Delivering value to users requires more than just writing code. It demands a structured approach to quality assurance and process consistency. A Definition of Done (DoD) serves as the foundation for this consistency. Without it, teams often face ambiguity regarding what constitutes a completed task. This ambiguity leads to technical debt, inconsistent releases, and frustrated stakeholders. When implemented correctly, a robust DoD streamlines user story delivery and ensures that every increment moving through the pipeline meets the necessary standards.

This guide explores how to construct a Definition of Done that genuinely supports the delivery of user stories. We will examine the nuances of quality gates, the distinction between DoD and acceptance criteria, and the practical steps to embed this practice into your workflow. By focusing on these elements, teams can improve velocity while maintaining high standards.

🧩 Understanding the Definition of Done

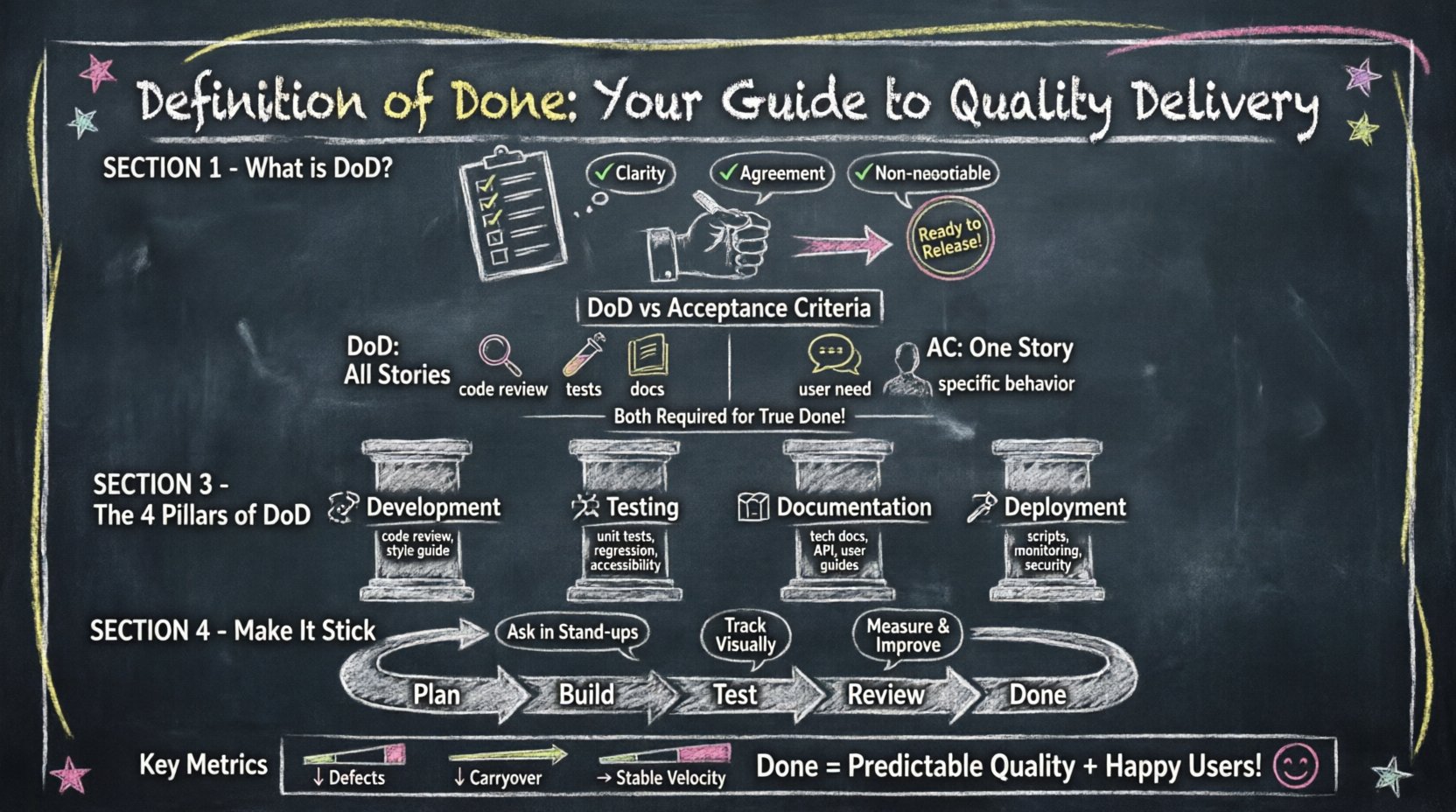

A Definition of Done is a shared understanding of what it means for a work item to be complete. It is not a suggestion; it is a requirement. When a user story reaches this state, the team agrees it is ready for release or deployment. This definition acts as a checklist that must be satisfied before the story can be moved to the “Done” column on the team's workflow board.

Many teams confuse the DoD with individual task requirements. However, the DoD applies uniformly to every item within a given team or product. It covers every user story, bug fix, or technical spike within the sprint. This universality is what creates predictability.

Key characteristics of a strong Definition of Done include:

- Clarity: Every team member understands the criteria without ambiguity.

- Agreement: The entire team, including stakeholders, agrees on the standards.

- Measurability: It is possible to verify whether the criteria are met.

- Non-negotiable: Items cannot be considered done unless all criteria are met.

Without these characteristics, the Definition of Done becomes a theoretical exercise rather than a practical tool. It must be actionable during daily stand-ups and sprint reviews. If a story is marked as done but fails to meet the DoD, the integrity of the sprint is compromised.

⚖️ DoD vs. Acceptance Criteria

One of the most common points of confusion in agile delivery is the difference between the Definition of Done and Acceptance Criteria. While both relate to quality, they serve different purposes. Understanding this distinction is vital for accurate planning and execution.

Acceptance Criteria are specific to a single user story. They define the behavior and functionality required to satisfy a specific user need. For example, a user story might state that “The user must be able to reset their password via email.” The acceptance criteria would detail the exact email content, the link expiration time, and the success message displayed.

The Definition of Done, by contrast, applies to all stories. It covers the quality standards that hold regardless of the feature being built, such as code reviews, unit tests, documentation updates, and security checks.

To clarify the relationship, consider the following comparison:

| Aspect | Definition of Done (DoD) | Acceptance Criteria (AC) |
|---|---|---|
| Scope | Applies to every story in the sprint | Applies to specific stories only |
| Purpose | Ensures quality and release readiness | Ensures specific user needs are met |
| Example | Code reviewed, unit tests passed | Password reset link expires in 24 hours |
| Flexibility | Consistent across the team | Varies by feature requirements |

When these two concepts are conflated, teams may end up with stories that function correctly but are not production-ready, or stories that meet quality standards but fail to solve the user problem. Both must be satisfied for a story to be truly complete.
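To make the distinction concrete, here is a minimal Python sketch in which a story counts as done only when the team-wide DoD and its own acceptance criteria are both satisfied. The `Story` class, the `TEAM_DOD` list, and the helper functions are hypothetical names for illustration, not part of any particular tracking tool.

```python
from dataclasses import dataclass, field

# Hypothetical team-wide DoD: the same items apply to every story.
TEAM_DOD = [
    "Code reviewed by a peer",
    "Unit tests written and passing",
    "Documentation updated",
]


@dataclass
class Story:
    title: str
    # Story-specific acceptance criteria, each mapped to whether it is satisfied.
    acceptance_criteria: dict[str, bool]
    # DoD items the team has verified for this particular story.
    dod_checked: set[str] = field(default_factory=set)


def unmet_requirements(story: Story, dod: list[str]) -> list[str]:
    """List every unmet DoD item and acceptance criterion for a story."""
    missing = [f"DoD: {item}" for item in dod if item not in story.dod_checked]
    missing += [f"AC: {ac}" for ac, ok in story.acceptance_criteria.items() if not ok]
    return missing


def is_done(story: Story, dod: list[str]) -> bool:
    """A story is done only when the DoD and its own AC are both satisfied."""
    return not unmet_requirements(story, dod)


reset = Story(
    title="Password reset via email",
    acceptance_criteria={
        "Reset email is sent": True,
        "Reset link expires in 24 hours": True,
    },
    dod_checked={"Code reviewed by a peer", "Unit tests written and passing"},
)
print(is_done(reset, TEAM_DOD))             # False
print(unmet_requirements(reset, TEAM_DOD))  # ['DoD: Documentation updated']
```

Here the password reset behaves exactly as specified, yet the story is not done because a DoD item is still open: it functions correctly but is not production-ready.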

🔍 Building the DoD Checklist

Creating a Definition of Done requires collaboration. It should not be dictated by management alone. The team members who do the work must have a say in what constitutes done. This ensures buy-in and realistic expectations.

When drafting the checklist, consider the following dimensions:

1. Development Standards

Code quality is the backbone of sustainable delivery. The DoD should mandate specific coding practices to prevent future issues. Consider including the following:

- Code has been reviewed by a peer.

- Code follows the established style guide.

- Static analysis tools report no new warnings.

- Database migrations are documented and tested.
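A “no new warnings” item is easier to enforce when it compares against a baseline instead of demanding zero warnings across an existing codebase. This minimal sketch assumes the analyzer's findings have already been exported as `(file, rule, message)` tuples; that format, the rule names, and the sample data are hypothetical rather than the output of a specific tool.

```python
from collections import Counter

# Hypothetical finding format: (file, rule, message). Line numbers are left out
# on purpose, since unrelated edits shift them and would create false "new" warnings.
Finding = tuple[str, str, str]


def new_warnings(baseline: list[Finding], current: list[Finding]) -> list[Finding]:
    """Return findings present in `current` but not in `baseline`.

    Counter subtraction keeps duplicates: if a file had one instance of a
    warning before and now has two, the extra instance is reported as new.
    """
    added = Counter(current) - Counter(baseline)
    return sorted(added.elements())


baseline = [("app/auth.py", "unused-import", "unused import 'os'")]
current = [
    ("app/auth.py", "unused-import", "unused import 'os'"),
    ("app/reset.py", "unused-variable", "unused variable 'token'"),
]

found = new_warnings(baseline, current)
print("DoD item met" if not found else f"New warnings: {found}")
```

Leaving line numbers out of the fingerprint is a trade-off: it avoids flagging old warnings that merely moved, at the cost of missing a new warning that exactly replaces a fixed one with the same file, rule, and message.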

2. Testing and Quality Assurance

Testing ensures that the functionality works as intended and does not break existing systems. This is often where teams face the most resistance due to time constraints. However, skipping testing is a false economy.

- Unit tests are written and passing.

- Integration tests cover critical workflows.

- Manual testing has been performed on the feature.

- Regression testing confirms no existing features are broken.

- Accessibility standards are met.

3. Documentation

Knowledge transfer is critical for long-term maintenance. Once a story is done, knowledge of how it works should be accessible to the rest of the team.

- Technical documentation is updated in the repository.

- User guides or help articles are created if applicable.

- API documentation reflects any new or changed endpoints.

- Comments in the code explain complex logic.

4. Deployment and Operations

The software must be deployable without manual intervention and with minimal risk. Operational readiness is often overlooked until a production incident occurs.

- Configuration changes are version controlled.

- Deployment scripts are updated and tested.

- Monitoring and alerting are configured for the new feature.

- Security scans have passed.

Teams should start with a baseline DoD and refine it over time. It is better to start with a few critical items than to create an overly burdensome list that slows down delivery without adding value.

🔄 Integrating DoD into the Workflow

Having a list of criteria is only half the battle. The team must integrate these checks into their daily workflow. If the DoD is reviewed only at the end of the sprint, it becomes a bottleneck rather than a facilitator.

Strategies for integration include:

- Task Breakdown: Break down the DoD items into sub-tasks within the user story. This ensures they are accounted for during estimation.

- Definition of Ready: Ensure stories meet the Definition of Ready before entering the sprint. This prevents stories from stalling due to missing information.

- Sprint Planning: Discuss the DoD during planning. If a story cannot meet the DoD within the sprint capacity, it should be split or moved.

- Daily Stand-up: Ask about DoD progress. If a story is blocked by a testing requirement, address it immediately.

- Sprint Review: Demonstrate the story against the DoD. If it is not done, do not count it toward velocity.
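The task-breakdown and sprint-review strategies can be sketched together: each DoD item becomes a sub-task on the story, and only stories whose DoD sub-tasks are all complete contribute to velocity. This is a minimal, tool-agnostic Python sketch; `SubTask`, `dod_subtasks`, `counted_velocity`, and the story IDs are hypothetical, and a real board would also track acceptance criteria.

```python
from dataclasses import dataclass

# Hypothetical baseline DoD; substitute the team's agreed checklist.
DOD_ITEMS = [
    "Peer code review",
    "Unit tests written and passing",
    "Technical documentation updated",
    "Monitoring and alerting configured",
]


@dataclass
class SubTask:
    story_id: str
    title: str
    done: bool = False


def dod_subtasks(story_id: str, dod_items: list[str]) -> list[SubTask]:
    """Create one sub-task per DoD item so the work is visible during estimation."""
    return [SubTask(story_id, f"[DoD] {item}") for item in dod_items]


def counted_velocity(story_points: dict[str, int], subtasks: list[SubTask]) -> int:
    """Sum points only for stories whose DoD sub-tasks all exist and are complete."""
    total = 0
    for story_id, points in story_points.items():
        own = [t for t in subtasks if t.story_id == story_id]
        # A story with no DoD sub-tasks is not counted: nothing shows it met the DoD.
        if own and all(t.done for t in own):
            total += points
    return total


story_points = {"STORY-1": 3, "STORY-2": 5}
tasks = dod_subtasks("STORY-1", DOD_ITEMS) + dod_subtasks("STORY-2", DOD_ITEMS)
for task in tasks:
    if task.story_id == "STORY-1":
        task.done = True  # all DoD work for STORY-1 is finished; STORY-2 is not
print(counted_velocity(story_points, tasks))  # 3
```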

Visual management tools can help track DoD compliance. If a story is in the “Done” column, it must have a green indicator showing all DoD items are checked. This visual cue reinforces the standard.
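An indicator like this can be derived directly from the story's checklist. In this minimal sketch the green state follows the text, while the amber and red states and the `move_to_done` guard are assumptions added for illustration; real board tools configure such rules through their own workflow settings rather than code like this.

```python
from enum import Enum


class Indicator(Enum):
    GREEN = "all DoD items checked"
    AMBER = "some DoD items checked"
    RED = "no DoD items checked"


def dod_indicator(checklist: dict[str, bool]) -> Indicator:
    """Summarize a story's DoD checklist as a traffic-light indicator."""
    checked = sum(checklist.values())  # True counts as 1
    if checklist and checked == len(checklist):
        return Indicator.GREEN
    # An empty checklist is treated as red: nothing has been verified yet.
    return Indicator.AMBER if checked else Indicator.RED


def move_to_done(story_id: str, checklist: dict[str, bool]) -> str:
    """Return the new column, refusing the move unless the indicator is green."""
    if dod_indicator(checklist) is not Indicator.GREEN:
        unmet = [item for item, ok in checklist.items() if not ok]
        raise ValueError(f"{story_id} cannot move to Done; unmet DoD items: {unmet}")
    return "Done"


checklist = {"Code reviewed": True, "Unit tests passing": True, "Docs updated": False}
print(dod_indicator(checklist).name)  # AMBER
try:
    move_to_done("STORY-42", checklist)
except ValueError as err:
    print(err)
```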

📈 Measuring Effectiveness

To know if the Definition of Done is working, the team must measure its impact. Metrics provide objective data on whether the process is improving delivery or hindering it.

Key metrics to track include:

- Carryover Rate: How many stories are moved to the next sprint because they were not marked “Done”?

- Defect Escape Rate: How many bugs are found in production? A decreasing rate suggests the DoD is effective.

- Cycle Time: The time from when work on a story starts until it meets the DoD. If the DoD is too strict, cycle time may increase. If it is too loose, cycle time may decrease but quality suffers.

- Team Velocity: Consistent velocity indicates that the team is delivering completed work reliably.

Review these metrics during the retrospective. If the carryover rate is high, the DoD may be too ambitious for the current capacity. If defect rates are high, the DoD needs to be more rigorous.
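These metrics can be computed from basic sprint records. The guidance above does not prescribe exact formulas, so the definitions in this sketch are assumptions: carryover rate as the share of planned stories not done by sprint end, defect escape rate as production defects divided by all defects found (one common convention), and cycle time in whole calendar days. The `StoryRecord` type and the sample data are illustrative only.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Optional


@dataclass
class StoryRecord:
    points: int
    started: date
    # None means the story did not meet the DoD by the end of the sprint.
    finished: Optional[date] = None


def carryover_rate(stories: list[StoryRecord]) -> float:
    """Share of planned stories that were not done and carry into the next sprint."""
    if not stories:
        return 0.0
    return sum(s.finished is None for s in stories) / len(stories)


def defect_escape_rate(found_in_production: int, found_before_release: int) -> float:
    """One common definition: production defects as a share of all defects found."""
    total = found_in_production + found_before_release
    return found_in_production / total if total else 0.0


def average_cycle_time_days(stories: list[StoryRecord]) -> float:
    """Mean days from start until the story met the DoD, over finished stories."""
    days = [(s.finished - s.started).days for s in stories if s.finished is not None]
    return float(mean(days)) if days else 0.0


def velocity(stories: list[StoryRecord]) -> int:
    """Story points from stories that met the DoD; partial work counts for nothing."""
    return sum(s.points for s in stories if s.finished is not None)


# Illustrative sprint data, not real measurements.
sprint = [
    StoryRecord(3, date(2024, 5, 6), date(2024, 5, 9)),
    StoryRecord(5, date(2024, 5, 6), date(2024, 5, 13)),
    StoryRecord(8, date(2024, 5, 8)),  # carried over
]
print(f"Carryover rate: {carryover_rate(sprint):.0%}")
print(f"Defect escape rate: {defect_escape_rate(2, 18):.0%}")
print(f"Average cycle time: {average_cycle_time_days(sprint)} days")
print(f"Velocity: {velocity(sprint)} points")
```

Tracking these per sprint, rather than as one-off snapshots, is what lets the retrospective see whether a DoD change moved them.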

🚧 Handling Technical Debt

Technical debt accumulates when shortcuts are taken to meet deadlines. A strong Definition of Done acts as a firewall against debt. However, sometimes debt is intentional. In these cases, it must be managed explicitly.

If a team decides to take a shortcut, they must create a follow-up task to address it later. This task should be added to the backlog with high priority. A follow-up task does not excuse a violation, however: the current story cannot be marked as done if the shortcut introduces known debt that violates the DoD standards.
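Making intentional debt explicit can be sketched as follows: every shortcut produces a high-priority backlog item linked to its story, while a shortcut that breaks a DoD item still blocks the story from being marked done. The classes, the `record_shortcut` and `can_mark_done` helpers, and the `"high"` priority label are hypothetical names chosen for this minimal Python sketch.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class BacklogItem:
    title: str
    priority: str
    linked_story: str


@dataclass
class Story:
    story_id: str
    # DoD items this story currently breaks because of a shortcut.
    dod_violations: list[str] = field(default_factory=list)
    follow_ups: list[BacklogItem] = field(default_factory=list)


def record_shortcut(story: Story, backlog: list[BacklogItem], description: str,
                    violated_dod_item: Optional[str] = None) -> BacklogItem:
    """Log an intentional shortcut as a high-priority follow-up linked to the story."""
    item = BacklogItem(f"Tech debt: {description}", "high", story.story_id)
    backlog.append(item)
    story.follow_ups.append(item)
    if violated_dod_item is not None:
        story.dod_violations.append(violated_dod_item)
    return item


def can_mark_done(story: Story) -> bool:
    """A follow-up task makes debt visible; it does not excuse a DoD violation."""
    return not story.dod_violations


backlog: list[BacklogItem] = []
story = Story("STORY-7")
record_shortcut(story, backlog, "hard-coded retry limit")
print(can_mark_done(story))  # True: debt is tracked and no DoD item is broken
record_shortcut(story, backlog, "integration tests skipped",
                violated_dod_item="Integration tests cover critical workflows")
print(can_mark_done(story))  # False
```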

This approach prevents debt from becoming invisible. It ensures that the team acknowledges the trade-off and commits to repayment. Over time, this discipline reduces the interest payments on technical debt.

🗣️ Managing Resistance and Culture

Implementing a strict Definition of Done often meets resistance. Team members may feel it slows them down. Stakeholders may feel it delays releases. It is important to address these concerns with data and empathy.

Common objections and responses:

- “It takes too long.” Response: It takes longer now, but it saves time later because we spend less time fixing bugs.

- “The customer doesn’t care.” Response: The customer cares about reliability. A buggy release damages trust.

- “We need to move fast.” Response: True velocity is sustainable speed. Breaking things slows everything down.

Culture plays a significant role here. If leadership supports the DoD, the team is far more likely to adhere to it. If leadership pushes for speed over quality, the DoD will be ignored. Building a culture of quality requires consistent reinforcement from all levels.

🔄 Updating and Evolving the DoD

The Definition of Done is not static. It should evolve as the team matures and as the technology stack changes. What was sufficient for the DoD six months ago may not be sufficient today.

Guidelines for updating the DoD:

- Review Quarterly: Set a regular cadence to review the checklist.

- Listen to Feedback: Ask team members what is missing or unnecessary.

- Adopt New Standards: As new security or compliance requirements emerge, add them to the list.

- Remove Redundancy: If a test is now automated and runs in the pipeline, the manual check in the DoD might be redundant.
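The redundancy review can be partly mechanized if each manual DoD item records which automated pipeline check, if any, would cover it. This minimal sketch assumes a hypothetical `covered_by` field and made-up check names; it only proposes candidates for removal, since the final decision still belongs to the team's review.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DodItem:
    text: str
    manual: bool
    # Hypothetical: name of the automated pipeline check that covers this item.
    covered_by: Optional[str] = None


def find_redundant(dod: list[DodItem],
                   pipeline_checks: set[str]) -> tuple[list[DodItem], list[DodItem]]:
    """Split the DoD into items to keep and manual items the pipeline now covers."""
    keep: list[DodItem] = []
    redundant: list[DodItem] = []
    for item in dod:
        if item.manual and item.covered_by in pipeline_checks:
            redundant.append(item)
        else:
            keep.append(item)
    return keep, redundant


dod = [
    DodItem("Manual regression pass on checkout", manual=True, covered_by="e2e-checkout"),
    DodItem("Manual accessibility review", manual=True),
    DodItem("Unit tests written and passing", manual=False, covered_by="unit-tests"),
]
pipeline_checks = {"unit-tests", "e2e-checkout"}

keep, redundant = find_redundant(dod, pipeline_checks)
for item in redundant:
    print(f"Candidate for removal: {item.text} (covered by {item.covered_by})")
```

The manual accessibility review stays because no pipeline check claims to cover it, which matches the text's cautious “might be redundant”.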

Evolution ensures the DoD remains relevant. A checklist that includes outdated practices becomes a hindrance. A checklist that grows with the team becomes a competitive advantage.

🌟 Impact on User Story Delivery

Ultimately, the goal is to support user story delivery. A well-crafted Definition of Done enhances this process in several ways.

- Predictability: Stakeholders know exactly what to expect when a story is marked done.

- Quality: Fewer bugs reach production, leading to higher user satisfaction.

- Confidence: The team can deploy with confidence, knowing standards are met.

- Focus: Developers can focus on building features rather than fixing integration issues later.

When the Definition of Done is respected, the entire delivery pipeline becomes smoother. Bottlenecks are reduced, and the flow of value to the customer increases. This is the true measure of success.

🏁 Final Thoughts on Quality

Building a Definition of Done is an investment in the team’s future. It requires time and effort to establish, but the returns are significant. By clearly defining what done means, teams can deliver user stories with confidence and consistency.

Start small, measure results, and iterate on the process. Avoid the temptation to skip steps for speed. Sustainable speed comes from quality. With a solid Definition of Done in place, the team is equipped to handle complex challenges and deliver value reliably.

Remember, the Definition of Done belongs to the team. It is a commitment to excellence. Honor that commitment, and the results will follow.