Designing a robust database schema is foundational to application stability. An Entity Relationship Diagram (ERD) is not merely a visual aid; it is the blueprint for how data flows, how constraints are enforced, and how the database engine manages resources. When these diagrams contain structural flaws, the consequences extend far beyond data integrity issues. They manifest as sluggish query execution times, excessive resource consumption, and persistent schema locks that halt concurrent operations.

Many development teams focus heavily on application logic while treating data modeling as a secondary concern. This oversight creates technical debt that is expensive to remediate later. The following guide details specific architectural errors found in ER diagrams and explains the technical mechanisms through which they degrade system performance.

📉 Understanding the Link Between Design and Runtime

The database engine operates on the schema definitions provided during creation. Every join, every constraint check, and every index scan is dictated by the metadata stored in the system catalog. When an ER diagram suggests a relationship that the engine cannot optimize, the system falls back to less efficient execution plans.

Consider the lifecycle of a transaction. It begins with reading data, performs logic, and ends with writing. If the underlying structure forces the engine to scan large portions of a table to verify a constraint, the transaction duration increases. Longer transactions hold locks for longer periods. Extended lock durations increase the probability of contention, leading to deadlocks and timeouts.

Key areas where design impacts runtime include:

- Join Complexity: Deeply nested relationships require multiple join operations.

- Constraint Overhead: Foreign keys and check constraints add validation steps to write operations.

- Index Utilization: Poorly defined columns prevent the query optimizer from using indexes effectively.

- Lock Granularity: Schema definitions determine whether locks are applied at the row, page, or table level.

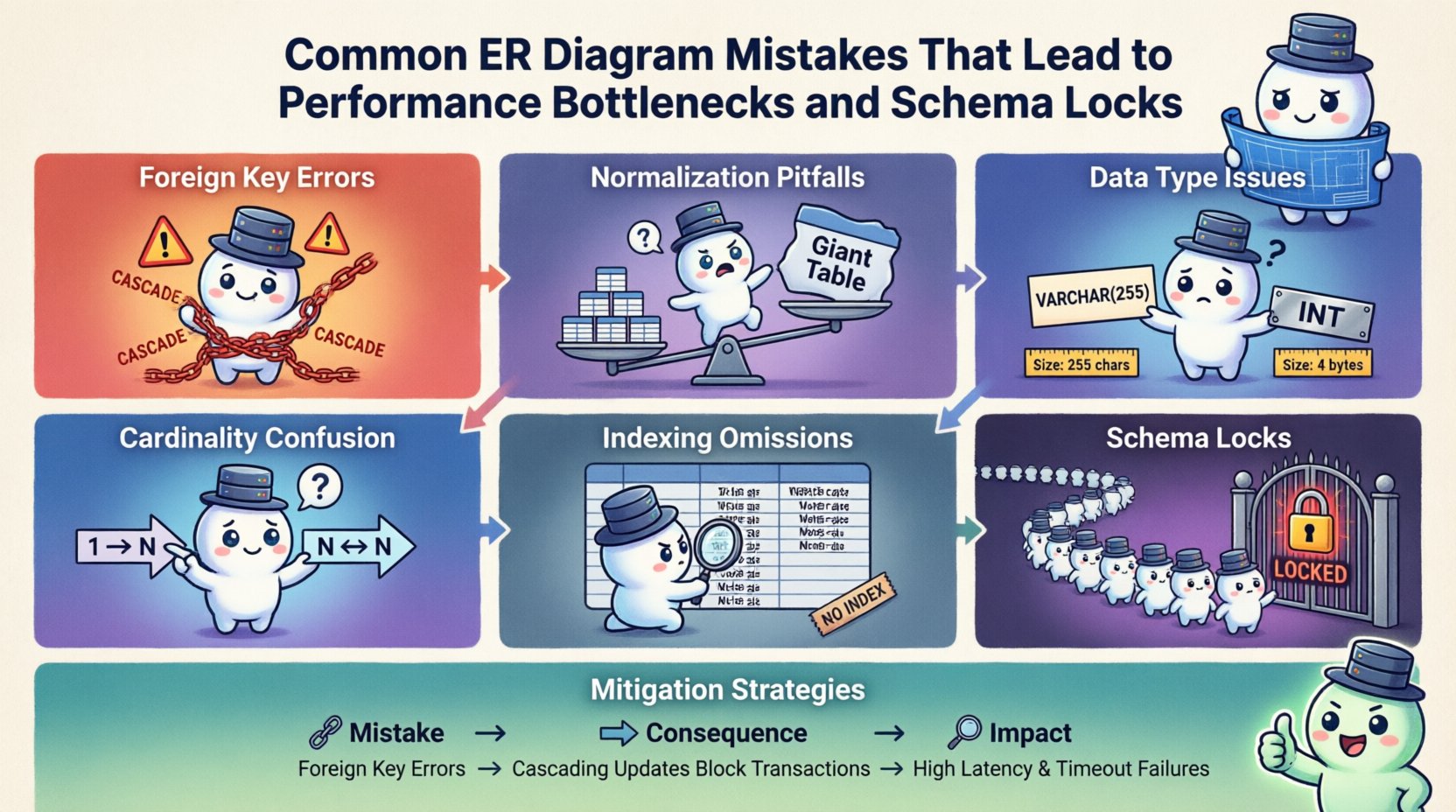

🔗 Foreign Key Configuration Errors

Foreign keys are essential for maintaining referential integrity, but their implementation in an ER diagram often introduces performance friction. The way relationships are defined dictates how the database engine handles insertions, updates, and deletions.

Missing Constraints

Defining a relationship in the ERD but omitting the actual foreign key constraint in the physical schema forces the application to manage integrity manually. This approach often leads to orphaned records. While it removes the overhead of constraint checking, it increases the risk of data corruption. More importantly, without explicit constraints, the database optimizer lacks information about relationships, potentially leading to suboptimal join strategies.

Excessive Cascade Operations

One of the most common pitfalls is enabling cascading deletes or updates across multiple levels of relationships. If a parent table has five levels of child tables with cascading actions, deleting a single row in the root triggers a chain reaction.

- Lock Escalation: The engine may escalate row locks to table locks to ensure atomicity across the cascade.

- Transaction Log Growth: Each step in the cascade generates log entries. Large cascades can fill transaction logs rapidly.

- Deadlock Risks: If two transactions attempt to cascade deletes on intersecting paths, deadlocks become highly probable.

Incorrect Referential Actions

Selecting SET NULL or NO ACTION without considering application behavior can cause unexpected failures. Conversely, CASCADE is dangerous for large datasets. The ER diagram must clearly specify the intended behavior so that the physical schema matches the business logic without imposing unnecessary runtime costs.

📊 Normalization Pitfalls

Normalization is a standard practice to reduce data redundancy. However, applying normalization rules rigidly without considering access patterns creates performance bottlenecks.

Over-Normalization

Pushing a database to Third Normal Form (3NF) or higher often results in a schema with excessive tables. While this minimizes storage, it maximizes join complexity. Every join operation requires the database engine to match keys across tables.

- I/O Overhead: Joining ten tables requires reading data from ten different storage locations.

- CPU Usage: The engine spends significant CPU cycles sorting and matching keys.

- Lock Contention: Multiple tables involved in a single query increase the surface area for locking conflicts.

Under-Normalization

Conversely, denormalizing without purpose leads to data duplication. When the same piece of information exists in multiple rows, updating it requires multiple write operations. This creates a window where data is inconsistent.

- Write Amplification: One logical update becomes multiple physical writes.

- Schema Locks: Updating redundant columns often requires locking multiple rows or even the entire table to ensure consistency.

📏 Data Type Selection Issues

The choice of data types in the ERD dictates how much space data occupies and how the engine compares values. Incorrect type selection is a silent performance killer.

Using Strings for Identifiers

Assigning VARCHAR or TEXT to primary keys or foreign keys is a frequent error. String comparisons are computationally more expensive than integer comparisons. Furthermore, strings require more storage, leading to larger pages and increased memory pressure.

- Index Size: Larger columns mean larger indexes. Full index scans become slower.

- Fragmentation: Variable-length strings increase the likelihood of page splits.

- Memory Usage: Buffer pools hold less data if rows are larger.

DateTime Precision

Using generic date types instead of specific timestamp types can lead to timezone handling issues. If the application logic expects UTC but the database stores local time, conversion overhead occurs during every query. Additionally, using DATE when TIME is needed forces the engine to process unnecessary granularity.

🔢 Cardinality Misinterpretations

Cardinality defines the numerical relationship between entities. Misinterpreting these relationships in the ERD leads to structural inefficiencies.

Confusing One-to-Many with Many-to-Many

A many-to-many relationship requires a junction table. If the ERD depicts this as a direct link, the physical schema will either be invalid or require a workaround. If the junction table is missing, the database must perform complex queries to resolve relationships, often resulting in cross joins or nested loops.

Ignoring Optional Relationships

Defining a mandatory relationship (1:1 or 1:N) when the data is actually optional introduces unnecessary constraints. This forces the application to provide a value even when it is irrelevant, or it forces the database to check for nulls constantly.

🔍 Indexing Omissions

An ERD should ideally indicate which columns are candidates for indexing. However, designers often omit this information, assuming the database will handle it automatically.

Missing Foreign Key Indexes

When a foreign key column is not indexed, the database must perform a full table scan to verify the constraint during every insert or update. This is a critical bottleneck for write-heavy workloads.

- Write Latency: Every write operation waits for the index lookup.

- Lock Duration: Longer scans mean locks are held longer.

Composite Index Misalignment

Designing indexes without considering the query patterns leads to wasted space. If queries frequently filter by column A and sort by column B, the index should reflect that order. Reversing the order renders the index useless for sorting.

🔒 Schema Lock Mechanisms Explained

Schema locks occur when the database engine prevents changes to the structure while operations are in progress. Poor design increases the frequency and duration of these locks.

DDL vs DML Contention

Schema Definition Language (DDL) operations, such as adding a column, often require an exclusive lock on the table. If the schema is dense with constraints and indexes, these operations take longer. During this time, all Data Manipulation Language (DML) operations are blocked.

Lock Escalation

Database engines automatically escalate locks from rows to tables to manage memory. Complex ER diagrams with wide tables and many relationships increase the number of rows involved in a transaction, triggering escalation sooner.

Metadata Locks

Every query references metadata. If the ERD defines complex views or stored procedures, the metadata dependency graph grows. Concurrent DDL operations can conflict with long-running queries that reference the same objects, causing metadata lock waits.

🛠 Common Mistakes vs. Consequences

| Design Mistake | Direct Consequence | Performance Impact |

|---|---|---|

| Missing Foreign Key Index | Full Table Scan on Write | High Latency, Increased CPU |

| Excessive Join Depth | Complex Execution Plan | Slow Reads, High Memory Usage |

| String Primary Keys | Large Index Size | More I/O, Cache Misses |

| Cascade Delete on Deep Hierarchy | Lock Escalation | Table Locks, Deadlocks |

| Redundant Data Storage | Multiple Write Operations | Write Amplification, Consistency Risks |

| Incorrect Cardinality | Invalid or Complex Joins | Query Failures, Slow Resolution |

✅ Mitigation Strategies

Correcting these issues requires a systematic approach to schema design. The following steps help align the ER diagram with performance goals.

1. Profile Access Patterns

Before finalizing the diagram, analyze how data will be queried. Identify the most common read and write paths. Design indexes and relationships to support these specific paths rather than theoretical use cases.

2. Limit Cascade Depth

Avoid cascading deletes beyond two levels. Implement application-level logic for deep hierarchy cleanup to allow for better transaction control and error handling.

3. Enforce Indexing Rules

Establish a standard that all foreign key columns are indexed. Do not rely on the database to guess the intent. Explicitly define indexes in the schema definition phase.

4. Standardize Data Types

Use fixed-length types for IDs. Use INT or BIGINT for keys. Use DECIMAL for financial data. Avoid generic types where specific precision is required.

5. Review Locking Behavior

Test the schema under load. Simulate concurrent writes to observe lock escalation. Adjust isolation levels and transaction sizes if deadlocks occur frequently.

🧪 Review and Validation

Validation is not a one-time event. As the application evolves, the schema must adapt.

- Static Analysis: Use tools to scan the schema for anti-patterns, such as missing indexes or circular dependencies.

- Query Analysis: Review slow query logs to identify joins that are not utilizing indexes efficiently.

- Lock Monitoring: Monitor for lock waits and deadlocks in production. These are direct indicators of schema design flaws.

🔄 Maintenance Considerations

Schemas are not static. They change over time. Designing for maintainability reduces the friction of future changes.

Versioning Changes

Track schema changes in version control. This allows teams to review the impact of a change before deployment. Small, incremental changes are less likely to cause schema locks than massive refactors.

Backward Compatibility

When altering a column, consider the impact on existing queries. Changing a data type or constraint can invalidate cached plans. Use online schema modification techniques where available to minimize downtime.

Documentation Alignment

Keep the ER diagram synchronized with the physical schema. Discrepancies between the design and the reality lead to confusion and deployment errors. Automated synchronization tools can help maintain this alignment.

📝 Final Technical Summary

The performance of a database system is inextricably linked to the quality of its ER diagram. Every relationship, data type, and constraint defined in the design phase propagates through the entire stack of the application. Ignoring these connections results in systems that are slow, unstable, and difficult to scale.

By avoiding common pitfalls such as missing indexes, over-normalization, and improper cascade behaviors, architects can build systems that handle load efficiently. The goal is not just data integrity, but also predictable runtime behavior. Regular reviews of the schema against actual usage patterns ensure that the design remains effective as requirements evolve.

Focusing on these technical details during the modeling phase saves significant engineering effort during the production phase. A well-structured ERD acts as a safeguard against the most common causes of database degradation. It ensures that the foundation of the application is solid, allowing the business logic to operate without interference from underlying infrastructure constraints.

Continuous monitoring and iterative refinement are necessary to maintain this state. As data volumes grow, the cost of a bad design decision increases exponentially. Therefore, investing time in precise ER diagram creation is a critical component of system reliability.