In the world of agile development, the focus often falls heavily on functional requirements. We ask, “What does the system do?” and “How does the user interact with it?” While these questions drive feature delivery, they often leave a critical gap: how well does the system perform its duties? This gap is where Non-Functional Requirements (NFRs) live. Ignoring them leads to technical debt, slow systems, and frustrated users.

This guide explores how to integrate quality attributes directly into your user stories. By treating quality as a feature rather than an afterthought, teams can build robust, reliable, and scalable software without sacrificing speed.

Understanding the Difference 🧠

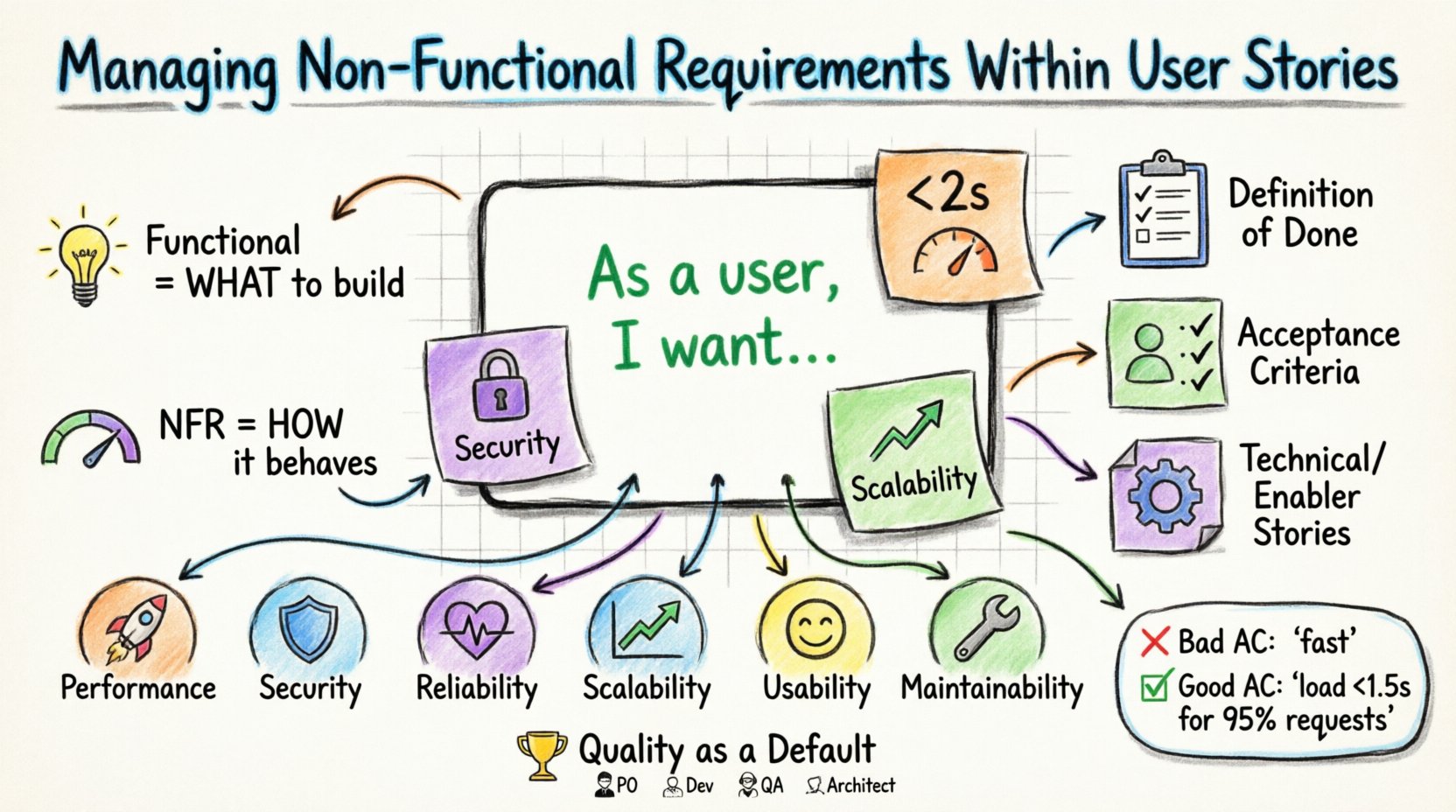

Before diving into integration, we must define the terms. A User Story describes functionality from the user’s perspective.

- Functional Requirement: Defines behavior. Example: “As a user, I want to reset my password.”

- Non-Functional Requirement: Defines constraints and qualities. Examples: “The password reset link must expire in 15 minutes” or “The page must load in under 2 seconds.”

Functional requirements tell you what to build. Non-functional requirements tell you how well it must work: how fast, how securely, how reliably. When the two are separated, NFRs often get pushed to the end of a sprint or ignored entirely. This results in a “works but is slow” or “works but is insecure” product.

Why NFRs Get Neglected ❌

Understanding why teams struggle with NFRs helps prevent the issue.

- Invisible Value: Users rarely complain about performance until it becomes too slow. They notice when a feature is missing, but they often tolerate poor quality for a while.

- Technical Complexity: Developers prefer building new features. Testing load times or security protocols requires specialized effort that feels disconnected from the user story.

- Vague Definitions: Terms like “fast” or “secure” are subjective. Without metrics, acceptance criteria cannot be met objectively.

- Siloed Teams: Architects design the system, but Product Owners define the stories. If they do not communicate, quality standards slip through the cracks.

Strategies for Integration 🛠️

There are three primary methods to ensure NFRs are addressed during development. Using these methods ensures quality is baked into the process.

1. The Definition of Done (DoD) 🏁

The Definition of Done is a checklist that applies to every user story. It ensures consistency across the backlog. Instead of writing a separate ticket for security, you include security checks in the DoD.

- All code must pass static analysis.

- All unit tests must pass.

- Code review must be completed by at least two peers.

- NFR Check: Does the feature meet the performance baseline?

- NFR Check: Has accessibility compliance been verified?

This approach prevents a story from being marked “Complete” until quality standards are met. It distributes the responsibility across the entire team.
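One way to make such a checklist enforceable is to record each item as a pass/fail result and derive the story's status from those results. The sketch below is a minimal illustration; `DodCheck` and `story_is_done` are hypothetical names, and in practice the results would come from CI tooling rather than being hard-coded.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DodCheck:
    """One Definition of Done item and whether it passed for a story."""
    name: str
    passed: bool


def story_is_done(checks):
    """Return (done, failed_names); a story is done only if every check passed."""
    failed = [check.name for check in checks if not check.passed]
    return (not failed, failed)


# Hard-coded results for illustration only.
checks = [
    DodCheck("static analysis", True),
    DodCheck("unit tests", True),
    DodCheck("two peer reviews", True),
    DodCheck("performance baseline", False),
    DodCheck("accessibility verified", True),
]

done, failed = story_is_done(checks)
print(done, failed)
```

Here the story stays open because one NFR check failed, even though all functional checks are green.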

2. Embedding in Acceptance Criteria ✅

Some NFRs are specific to a single feature. These belong in the Acceptance Criteria section of the User Story. This makes the quality requirement visible and testable for that specific story.

Example Story: As a shopper, I want to filter products by price range.

Functional Criteria: Slider adjusts price range; results update dynamically.

NFR Criteria: Filter results must appear within 500ms of slider movement.

By placing this in the criteria, the developer knows exactly what performance metric to optimize. The tester knows exactly what to measure.
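A criterion like this can be turned into an automated check. The following is a rough sketch, assuming the filter logic can be called directly from a test; `filter_products` is a hypothetical stand-in for the real implementation, and an in-process timing like this does not include network or rendering time, so it only covers part of what the user experiences.

```python
import time

MAX_FILTER_LATENCY_S = 0.5  # the 500 ms limit from the NFR criterion


def filter_products(products, low, high):
    """Hypothetical stand-in for the real price filter."""
    return [p for p in products if low <= p["price"] <= high]


def measure_latency(func, *args):
    """Run func and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = func(*args)
    return result, time.perf_counter() - start


# Synthetic catalogue; a real test would use representative data volumes.
products = [{"id": i, "price": i % 500} for i in range(10_000)]

result, elapsed = measure_latency(filter_products, products, 100, 200)
assert elapsed < MAX_FILTER_LATENCY_S, f"filter took {elapsed:.3f}s"
```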

3. Independent NFR Stories 📋

Occasionally, an NFR is too large to fit into a single functional story. If improving the database architecture is required to support a new feature, it might need its own ticket. This is often called a Technical Story or Enabler Story.

- When to use: Refactoring code, upgrading infrastructure, or implementing a new security framework.

- Goal: These stories provide the capacity to deliver future functional stories faster and safer.

- Balance: Do not let technical stories dominate the backlog. They should enable business value, not exist in isolation.

Key Categories of Non-Functional Requirements 📊

Not all NFRs are created equal. Below is a breakdown of the most critical categories and how to handle them.

| Category | Question to Ask | Example Metric |
|---|---|---|
| Performance | How fast does it respond? | Page load < 2 seconds |
| Security | Is data protected? | End-to-end encryption required |
| Reliability | How often does it fail? | 99.9% uptime availability |
| Scalability | Can it handle growth? | Support 10k concurrent users |
| Usability | Is it easy to use? | Task completion rate > 90% |
| Maintainability | Is code easy to change? | Cyclomatic complexity < 10 |
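An availability target such as 99.9% is easier to discuss when converted into an allowed-downtime budget. The sketch below does that arithmetic, assuming a 30-day period; the function name is illustrative.

```python
def downtime_budget_minutes(availability_percent, period_days=30):
    """Minutes of downtime allowed in the period at the given availability."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (1 - availability_percent / 100)


print(round(downtime_budget_minutes(99.9), 1))   # 43.2
print(round(downtime_budget_minutes(99.99), 2))  # 4.32
```

So 99.9% uptime over 30 days leaves roughly 43 minutes for all outages combined, which is a concrete number a team can plan against.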

Deep Dive: Performance ⚡

Performance NFRs are often the most visible to users. Slow systems lead to abandonment. To manage these:

- Set Baselines: Use existing system metrics as a baseline. If the old system took 3 seconds, the new one should take less, not more.

- Define Thresholds: Distinguish between “acceptable” and “critical”. A 200ms delay might be fine for a report, but unacceptable for a real-time chat.

- Automate Monitoring: Integrate performance tests into the Continuous Integration pipeline. If a commit degrades speed, the build should fail.
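A build-failing performance gate can be as simple as comparing a measured time against the recorded baseline plus a tolerance. This is a minimal sketch under assumed numbers; the 10% tolerance, the function name, and the hard-coded measurements are illustrative, and a real pipeline would pass the returned code to its exit status.

```python
def check_regression(baseline_ms, measured_ms, tolerance=0.10):
    """Return 0 (pass) if measured time is within tolerance of baseline, else 1."""
    limit_ms = baseline_ms * (1 + tolerance)
    if measured_ms > limit_ms:
        print(f"FAIL: {measured_ms} ms exceeds limit of {limit_ms:.0f} ms")
        return 1
    print(f"OK: {measured_ms} ms within limit of {limit_ms:.0f} ms")
    return 0


# Example: the old system took 3 seconds; this commit measured 3.6 seconds.
exit_code = check_regression(baseline_ms=3000, measured_ms=3600)
```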

Deep Dive: Security 🔒

Security is not a feature; it is a prerequisite. However, specific security needs arise with features.

- Authentication: Does the story require Multi-Factor Authentication?

- Data Privacy: Does the feature store personally identifiable information (PII)? If so, how is it masked or encrypted?

- Audit Trails: Should actions be logged for compliance?

Ensure developers know which data classification applies to the new feature. This dictates the level of protection required.
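To illustrate how a data classification can dictate protection, the sketch below maps each classification to a set of required controls and reports what a feature is still missing. The classification levels and control names are assumptions made for the example, not a standard; substitute your organization's own policy.

```python
from enum import Enum


class DataClass(Enum):
    """Hypothetical classification levels."""
    PUBLIC = "public"
    INTERNAL = "internal"
    PERSONAL = "personal"  # for example, PII


# Illustrative policy: controls required per classification.
REQUIRED_CONTROLS = {
    DataClass.PUBLIC: set(),
    DataClass.INTERNAL: {"access control"},
    DataClass.PERSONAL: {"access control", "encryption at rest", "audit logging"},
}


def missing_controls(classification, implemented):
    """Return the required controls the feature has not implemented yet."""
    return sorted(REQUIRED_CONTROLS[classification] - set(implemented))


print(missing_controls(DataClass.PERSONAL, ["access control"]))
# ['audit logging', 'encryption at rest']
```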

Deep Dive: Scalability 📈

Scalability concerns how the system grows. This is often an architectural decision.

- Vertical vs. Horizontal: Does the feature require more power on a single server, or more servers?

- Bottlenecks: Identify where the load increases. Is it the database? The API? The frontend rendering?

- Future Proofing: Ask, “Will this work if traffic doubles next month?” If the answer is no, the story needs a scalability component.
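The "traffic doubles" question can be answered with simple arithmetic if you know the current peak load and the capacity you have actually load-tested. A minimal sketch, with illustrative numbers in requests per second and a hypothetical function name:

```python
def has_headroom(current_peak_rps, tested_capacity_rps, growth_factor=2.0):
    """True if the tested capacity still covers the peak after growth."""
    return current_peak_rps * growth_factor <= tested_capacity_rps


print(has_headroom(400, 1000))  # True: 800 <= 1000
print(has_headroom(600, 1000))  # False: 1200 > 1000
```

When the check returns False, that is the signal to add a scalability component to the story.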

The Role of Acceptance Criteria 📝

Acceptance Criteria (AC) are the contract between the business and the team. They define success. NFRs must be written as testable AC.

Bad Example

AC: The system should be fast.

Problem: “Fast” is subjective. One person’s fast is another’s slow.

Good Example

AC: The search results page must load within 1.5 seconds for 95% of requests.

Benefit: This is measurable. A test can pass or fail based on this number.
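A criterion phrased as "within 1.5 seconds for 95% of requests" corresponds to checking the 95th percentile of measured load times. The sketch below uses the nearest-rank percentile method on made-up samples; the function names and data are illustrative, and monitoring tools may use a slightly different percentile definition.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def meets_p95_target(load_times_s, target_s=1.5):
    """True if 95% of requests loaded within target_s seconds."""
    return percentile(load_times_s, 95) <= target_s


# 20 made-up measurements each; one slow outlier is tolerated, two are not.
passing = [0.8] * 15 + [1.2] * 4 + [4.0]
failing = [0.8] * 15 + [1.2] * 3 + [4.0] * 2

print(meets_p95_target(passing))  # True
print(meets_p95_target(failing))  # False
```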

Tips for Writing NFR Acceptance Criteria

- Use Numbers: Quantify everything possible (time, count, size).

- Use Conditions: Specify under what conditions the metric applies (e.g., “on a 4G connection”).

- Define Failure: Clearly state what happens if the NFR is not met.
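These three tips suggest a simple structure for an NFR criterion: a metric, a numeric threshold, the condition under which it applies, and the consequence of missing it. The sketch below models that structure; the class and field names are hypothetical, and it assumes "lower is better" metrics such as load time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NfrCriterion:
    metric: str        # what is measured
    threshold: float   # the number (tip: use numbers)
    unit: str
    condition: str     # when it applies (tip: use conditions)
    on_failure: str    # what happens if it is missed (tip: define failure)

    def is_met(self, measured):
        """True if the measured value does not exceed the threshold."""
        return measured <= self.threshold

    def describe(self):
        """Render the criterion as a sentence for the story."""
        return (
            f"{self.metric} must be at most {self.threshold} {self.unit} "
            f"{self.condition}; otherwise {self.on_failure}."
        )


criterion = NfrCriterion(
    metric="Search results page load time",
    threshold=1.5,
    unit="seconds",
    condition="for 95% of requests on a 4G connection",
    on_failure="the release is blocked",
)
print(criterion.describe())
```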

Testing Non-Functional Requirements 🧪

Functional testing verifies behavior. NFR testing verifies quality. Both are necessary.

- Unit Tests: Developers write these to verify logic. They do not typically measure performance.

- Integration Tests: Verify that components work together. Good place for API latency checks.

- Load Testing: Simulate user traffic. Essential for performance and scalability stories.

- Security Scanning: Automated tools can scan code for vulnerabilities. Manual penetration testing may be required for sensitive features.

- Accessibility Testing: Automated tools check contrast and structure. Manual testing with screen readers verifies real-world usability.

Do not rely solely on developers to test NFRs. Quality Assurance engineers should be involved in planning to ensure test environments support the required load or configurations.

Collaboration and Communication 🤝

Managing NFRs is a team sport. It requires input from various roles.

Product Owner

- Prioritizes stories that improve quality.

- Ensures the backlog reflects business risks (e.g., security compliance).

- Defines the “value” of a fast system versus a slow one.

Development Team

- Identifies technical constraints during refinement.

- Proposes architectural changes to meet NFRs.

- Implements the code so that it meets the metrics.

Quality Assurance

- Designs tests for NFRs (e.g., load scripts).

- Validates that metrics are met before release.

- Reports regressions in quality metrics.

Architecture / Technical Leads

- Set the standards for maintainability and security.

- Review designs to ensure scalability.

- Advise on trade-offs when business speed conflicts with technical quality.

Common Pitfalls to Avoid 🚫

Avoid these mistakes to maintain a healthy balance between features and quality.

- Over-Engineering: Building for 1 million users when you have 100. This wastes time. Size the NFRs to the current context, with room to grow.

- Ignoring Legacy: New features often interact with old code. NFRs must consider the impact on the existing system.

- Waterfall Mindset: Do not wait until the end of the project to test performance. Test incrementally.

- Ignoring UX: Performance NFRs matter, but so does Usability. A fast site that is confusing is still a failure.

Measuring Success 📉

How do you know if your NFR management is working? Track these metrics over time.

- Lead Time: Are NFR stories slowing down delivery? If so, refine the criteria.

- Defect Rate: Are bugs related to performance or security decreasing?

- Customer Satisfaction: Are users reporting fewer complaints about speed or crashes?

- Build Stability: Are fewer builds failing due to quality gates?

Continuous improvement relies on data. Review these metrics in retrospectives to adjust your approach.

Practical Example: A Login Feature 🔐

Let’s look at a complete User Story that includes NFRs.

Story

Title: Secure User Login

Description: As a registered user, I want to log in securely so that I can access my account.

Acceptance Criteria

- Functional: User enters email and password. System validates credentials. Redirect to dashboard on success.

- Functional: System blocks access if credentials are incorrect.

- NFR (Security): Passwords must be stored only as salted hashes produced by a dedicated password-hashing algorithm (for example bcrypt, scrypt, Argon2, or PBKDF2). Session tokens must expire after 30 minutes of inactivity.

- NFR (Performance): Login response time must be under 1 second.

- NFR (Security): Account must lock after 5 failed attempts to prevent brute force attacks.

- NFR (Accessibility): Login form must be navigable via keyboard only.

Notice how the NFRs are specific and testable. They are not an afterthought. They are part of the definition of success.
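Because the criteria are numeric, two of them (the lockout after 5 failed attempts and the 30-minute inactivity timeout) translate almost directly into testable logic. The following is a simplified sketch, not a production authentication design; `Account`, `record_login_attempt`, and `session_expired` are hypothetical names, and credential validation itself is left out.

```python
from dataclasses import dataclass

MAX_FAILED_ATTEMPTS = 5            # from the lockout criterion
SESSION_IDLE_TIMEOUT_S = 30 * 60   # 30 minutes of inactivity


@dataclass
class Account:
    failed_attempts: int = 0
    locked: bool = False


def record_login_attempt(account, credentials_valid):
    """Update lockout state and return True only if login is allowed."""
    if account.locked:
        return False
    if credentials_valid:
        account.failed_attempts = 0
        return True
    account.failed_attempts += 1
    if account.failed_attempts >= MAX_FAILED_ATTEMPTS:
        account.locked = True
    return False


def session_expired(last_activity_s, now_s):
    """True once the session has been idle for the full timeout."""
    return now_s - last_activity_s >= SESSION_IDLE_TIMEOUT_S


account = Account()
for _ in range(5):
    record_login_attempt(account, credentials_valid=False)
print(account.locked)  # True
```

A tester can assert on exactly these boundaries: the fifth failure locks the account, and a session idle for 1,800 seconds is rejected.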

Handling Technical Debt 💣

Even with the best planning, technical debt accumulates. This happens when NFRs are compromised to meet deadlines.

- Track It: Explicitly log technical debt in the backlog. Do not hide it.

- Refactor Regularly: Dedicate a portion of every sprint to improving code quality. Some teams instead reserve an entire sprint for this work, often called a “Refactoring Sprint” or “Quality Sprint”.

- Pay Down Debt: When completing a story means taking on significant debt, allocate time to fix that debt alongside the feature.

- Prevent New Debt: Enforce the DoD strictly. Do not allow debt to accumulate if you can avoid it.

Ignoring technical debt is like ignoring interest on a loan. It grows until it becomes unpayable. Proactive management of NFRs keeps the debt manageable.

Conclusion: Quality as a Default 🏆

Integrating Non-Functional Requirements into User Stories is not about adding bureaucracy. It is about aligning technical execution with user expectations. When performance, security, and reliability are treated as explicit requirements, the resulting software is more stable and valuable.

By using the Definition of Done, writing measurable Acceptance Criteria, and fostering collaboration across roles, teams can deliver high-quality features consistently. The goal is not perfection, but continuous improvement. Every story is an opportunity to build a better system. Treat quality as a core component of your product, and your users will notice the difference.

Start by reviewing your next sprint backlog. Identify where NFRs are missing. Add them. Test them. Improve them. The system will thank you.